The Harness Moves the Score

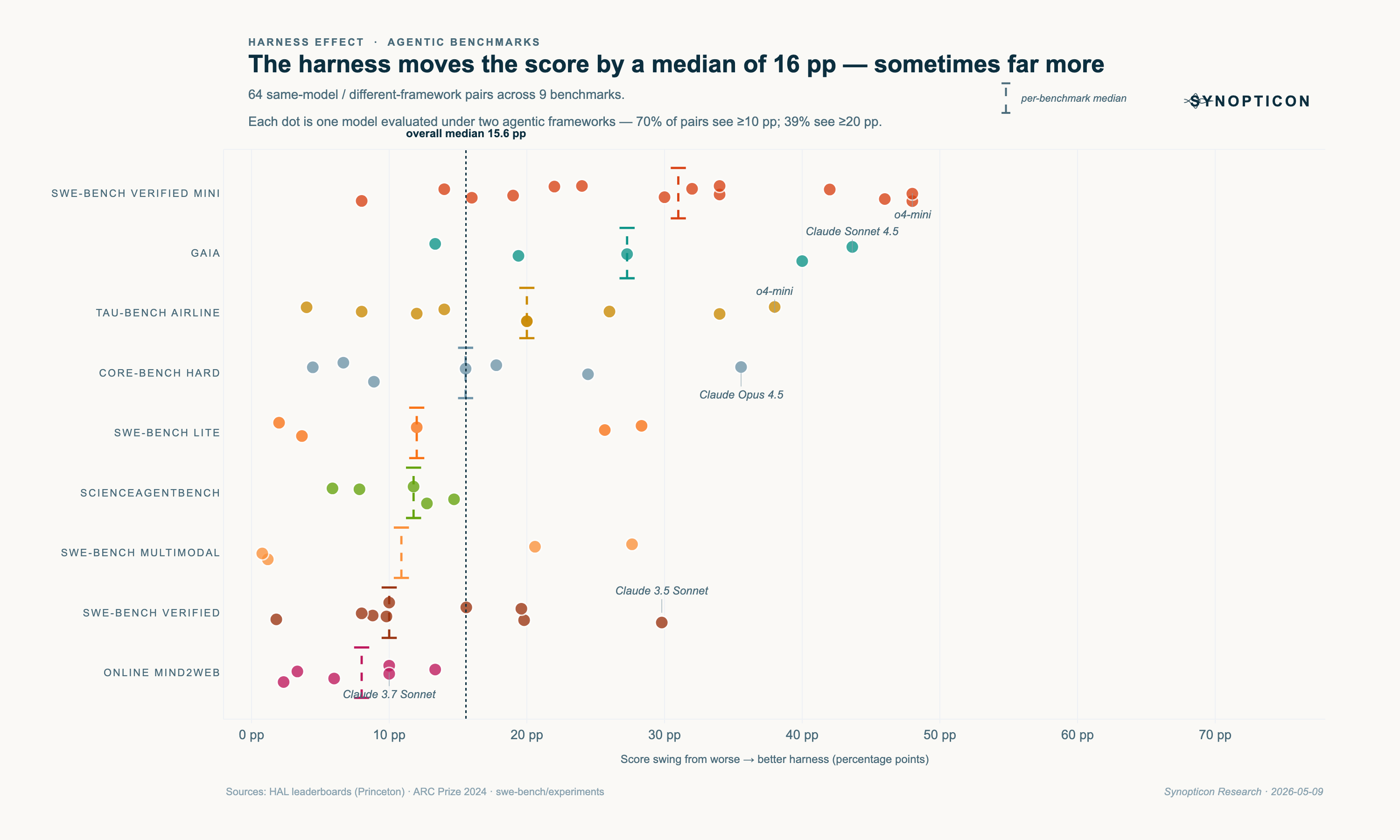

How much of a frontier model's benchmark score is the model, and how much is the wrapper around it? Across 64 same-model, different-wrapper pairs on 9 agentic benchmarks, the median gap is 16 percentage points.

Sam Donahue · May 11, 2026

Headline cards: pair count across 9 benchmarks, median score swing, the o4-mini pair on SWE-bench Mini, and the share of pairs with Δ ≥ 10 pp and Δ ≥ 20 pp.

Where the pairs come from

A pair is one base model run on one benchmark under two different agentic frameworks. SWE-Agent versus Agentless on GPT-4o. HAL Generalist versus Claude Code on Claude Opus 4.5. HF Open Deep Research versus HAL Generalist on Claude Sonnet 4.5. Same model, different wrapper, different score. The gap belongs to the wrapper.

Most pairs come from HAL, Princeton's Holistic Agent Leaderboard. HAL runs each model under multiple frameworks on standardised infrastructure across six benchmarks: GAIA (web research), SWE-bench Verified Mini (coding), CORE-Bench Hard (scientific reproducibility), TAU-bench Airline (customer service), Online Mind2Web (web tasks), and ScienceAgentBench. Standardised infrastructure makes the harness Δ on HAL unusually clean. The rest come from swe-bench/experiments (Verified, Lite, Multimodal) and the ARC Prize board.

How big is it, and where?

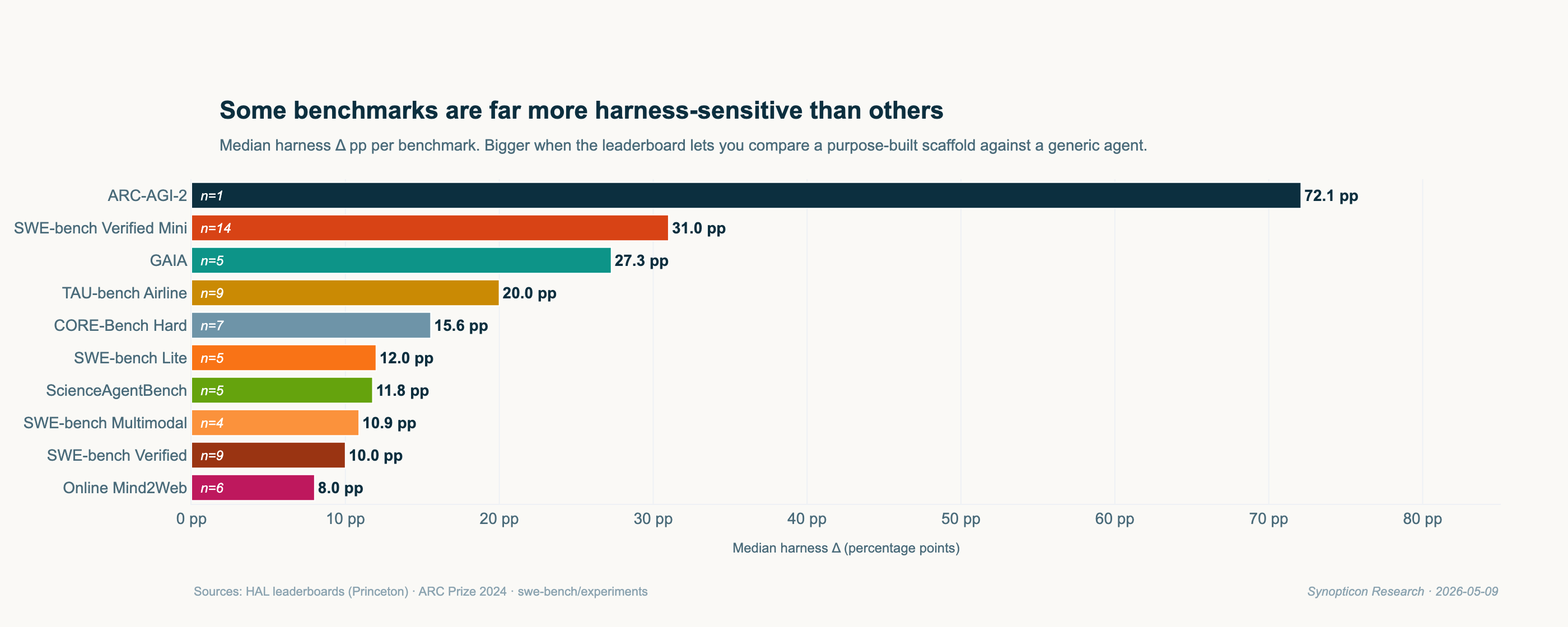

The aggregate median is 15.6 pp; the per-benchmark spread is wider. Where does the effect concentrate?

Each bar is the median of |delta_pp| across that benchmark's same-model pairs. SWE-bench Verified Mini has the most pairs (n = 14) and the widest spread; Online Mind2Web (n = 6) the narrowest.
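The per-benchmark bars can be reproduced with a few lines of standard-library Python. This is a minimal sketch, not the author's script: the `benchmark` key is an assumed column name (only `delta_pp` appears in the text), and the toy rows are invented for illustration.

```python
from collections import defaultdict
from statistics import median

def median_abs_delta_by_benchmark(pairs):
    """Return (benchmark, median |delta_pp|, n) tuples, widest gap first.

    `pairs` is an iterable of dicts with 'benchmark' (assumed name)
    and 'delta_pp' keys.
    """
    by_bench = defaultdict(list)
    for p in pairs:
        # The sign of delta_pp depends on pair ordering, so take |delta|.
        by_bench[p["benchmark"]].append(abs(p["delta_pp"]))
    rows = [(b, median(v), len(v)) for b, v in by_bench.items()]
    return sorted(rows, key=lambda r: r[1], reverse=True)

# Invented rows for illustration only; not values from the dataset.
toy_pairs = [
    {"benchmark": "bench_a", "delta_pp": -30.0},
    {"benchmark": "bench_a", "delta_pp": 10.0},
    {"benchmark": "bench_a", "delta_pp": 20.0},
    {"benchmark": "bench_b", "delta_pp": 4.0},
    {"benchmark": "bench_b", "delta_pp": -8.0},
]

for name, med, n in median_abs_delta_by_benchmark(toy_pairs):
    print(f"{name}: median |delta| = {med:.1f} pp (n = {n})")
```

Sorting widest-first mirrors the bar order the next paragraph reads as a clue to the mechanism.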

Bar order is the first clue to the mechanism. Top benchmarks (SWE-bench Verified Mini, GAIA, TAU-bench Airline) host both purpose-built scaffolds and generic agents on the same leaderboard. Bottom benchmarks (Online Mind2Web, SWE-bench Verified) host scaffolds that have converged. The harness effect is large when the leaderboard is unsettled and shrinks as the design space converges.

Why the gap exists

A purpose-built scaffold encodes three things a generic agent does not. A tool surface fitted to the benchmark: file-edit and pytest tools for code, DOM and click tools for web. An agent loop tuned to that task class's failure modes: re-plan after a failed test, re-locate after a navigation change. And a system prompt that already knows what the eval looks like.

A generic agent has none of that. It has to discover the right tool, the right loop, and the right framing inside the eval. The harness Δ is the cost of that discovery. Web tasks have converged on Browser-Use and SeeAct, so the gap is small. Code tasks have not: SWE-Agent, Agentless, OpenHands, ACoder, and Refact.ai encode different bets, and those bets produce different scores.

Specialised vs generic: do scaffolds beat agents?

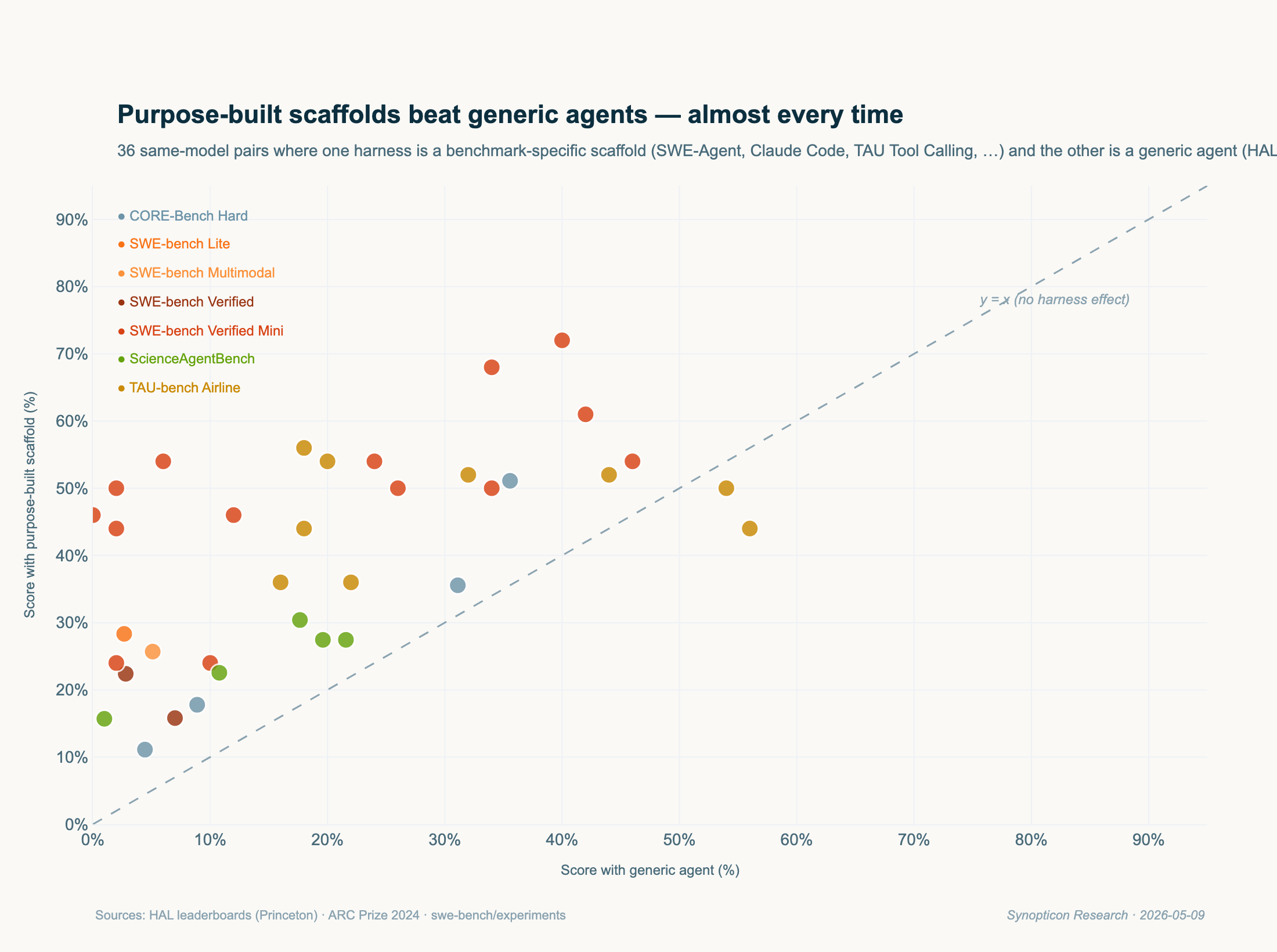

If specialised scaffolds reliably beat generic agents, every dot should sit above the y = x diagonal.

The subset is 36 pairs where one side is benchmark-specific (SWE-Agent, TAU-bench Tool Calling, Claude Code, CORE-Agent, SAB Self-Debug) and the other is generic (HAL Generalist, HF Open Deep Research, RAG, direct prompting). Pairs where both sides are specialised or both generic are excluded; they would not test the question.
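The subset filter can be sketched as a small classifier over framework names. The two label sets are copied from the paragraph above; the dict keys (`framework_a`, `score_a`, and so on) and the toy scores are assumptions made for illustration, and any framework outside both sets is simply left unclassified.

```python
# Framework labels as listed in the text; anything else is unclassified.
SPECIALISED = {"SWE-Agent", "TAU-bench Tool Calling", "Claude Code",
               "CORE-Agent", "SAB Self-Debug"}
GENERIC = {"HAL Generalist", "HF Open Deep Research", "RAG",
           "direct prompting"}

def orient(pair):
    """Return (specialised_score, generic_score), or None if not a mixed pair."""
    a, b = pair["framework_a"], pair["framework_b"]
    if a in SPECIALISED and b in GENERIC:
        return pair["score_a"], pair["score_b"]
    if b in SPECIALISED and a in GENERIC:
        return pair["score_b"], pair["score_a"]
    return None  # both specialised, both generic, or unclassified

def summarise(pairs):
    """Count mixed pairs and how many put the specialised side strictly ahead."""
    mixed = [o for o in map(orient, pairs) if o is not None]
    wins = sum(1 for spec, gen in mixed if spec > gen)
    return {"mixed_pairs": len(mixed), "specialised_wins": wins}

# Invented scores for illustration only.
toy = [
    {"framework_a": "HAL Generalist", "score_a": 30.0,
     "framework_b": "Claude Code", "score_b": 55.0},
    {"framework_a": "SWE-Agent", "score_a": 40.0,
     "framework_b": "CORE-Agent", "score_b": 35.0},      # both specialised
    {"framework_a": "RAG", "score_a": 20.0,
     "framework_b": "SAB Self-Debug", "score_b": 20.0},  # on the diagonal
]
print(summarise(toy))
```

A pair with equal scores lands on the diagonal and is not counted as a win, which matches how the on-diagonal dots are treated in the discussion.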

On the benchmarks where the comparison is possible, the harness layer accounts for 30–50% of the score.

Both on-diagonal dots are Online Mind2Web, the most converged benchmark. Where design space is still open (code, customer service, scientific reproducibility), the specialised wrapper does 30–50% of the work. A model-only baseline does not represent what the same model can do under a real scaffold.

Does owning both layers pay off?

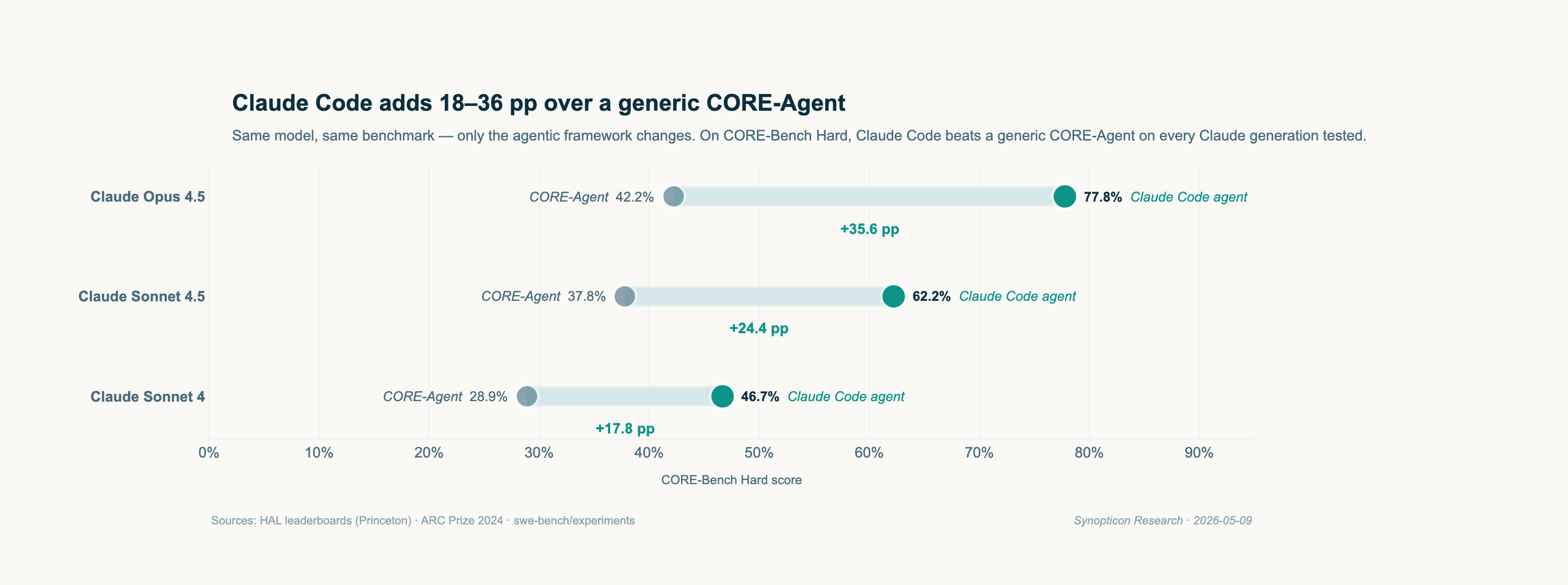

Anthropic builds both Claude (the model) and Claude Code (the agent). If integration is a real moat, the same Claude model should beat itself under a third-party wrapper. Does it?

CORE-Bench Hard (scientific code reproducibility, 45 tasks) is the only public benchmark that runs the same Claude model under both Anthropic's own agent (Claude Code) and a third-party baseline (CORE-Agent). HAL has done this for three Claude generations. Claude Code wins by 18–36 pp every time, and the premium grows with the model: Opus 4.5 gains 35.6 pp, Sonnet 4 gains 17.8 pp. Newer Claude generations get more from Claude Code, not less.

OpenAI's Codex CLI has no equivalent same-model benchmark. Gemini has nothing comparable. The Anthropic premium is invisible in raw model benchmarks and worth 18–36 pp at the application layer. SkillsBench reports the same pattern one layer below the harness (see literature section).

Are expensive harnesses worth it?

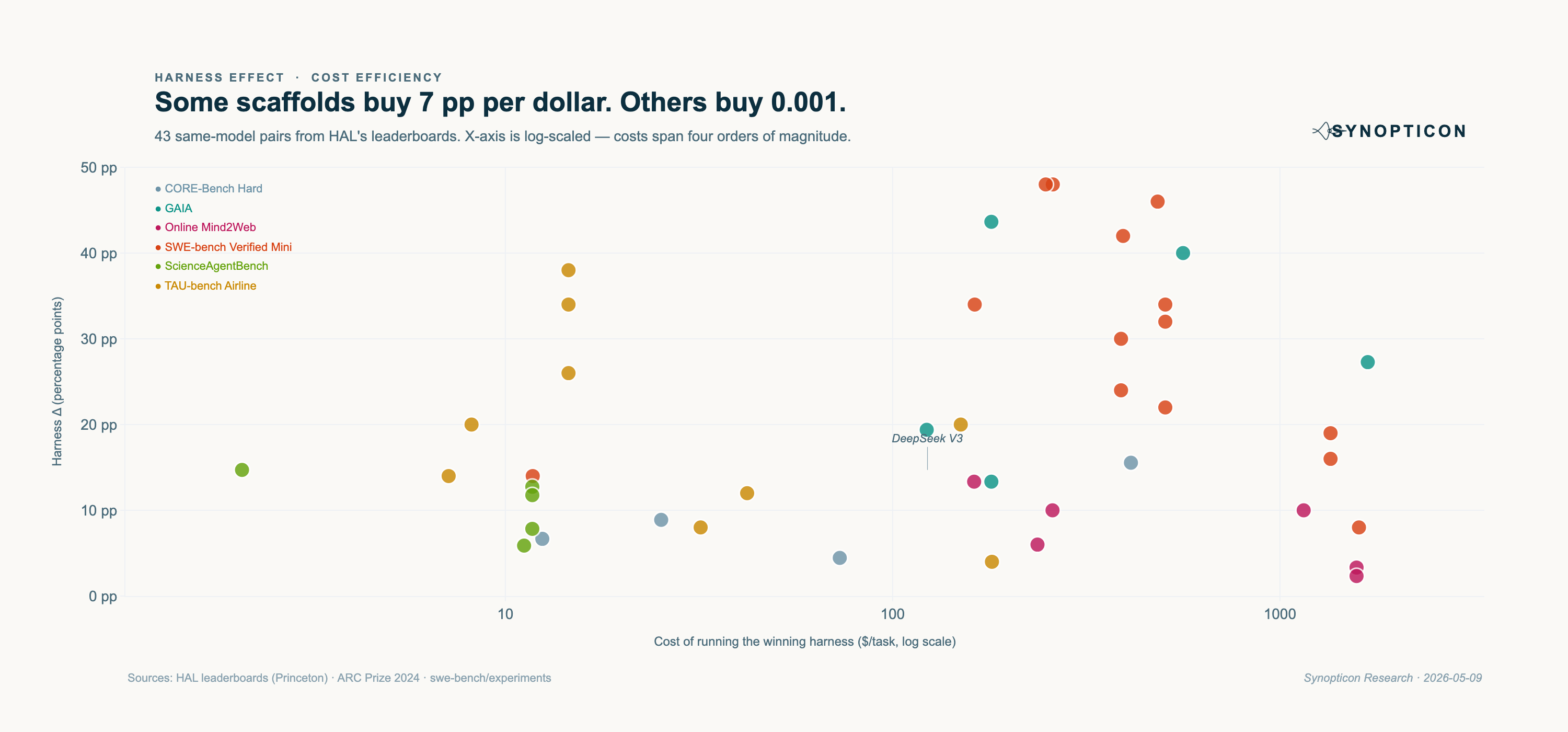

Harness cost spans $1 to $1,600 per task on HAL. If you pay 1,000× more, do you get 1,000× more Δ?

Costs come from HAL's COST (USD) column; 43 of 64 pairs have published cost for both harnesses. If price predicted performance, we would see a clear upward trend. We see scatter. The most efficient pair is DeepSeek V3 on ScienceAgentBench: +14.7 pp for $2.09 per task, or 7.0 pp per dollar. The least efficient is Browser-Use on Online Mind2Web with Claude models: $1,150–$1,577 per task for 2–10 pp.
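The efficiency metric is a plain ratio, sketched below. Which harness's cost goes in the denominator is not stated in the text, so the function takes whatever per-task cost the caller supplies. The Browser-Use bounds pair the ends of the two quoted ranges, which is an assumption about how they line up.

```python
def pp_per_dollar(delta_pp, cost_usd):
    """Percentage points of score gained per dollar of per-task cost."""
    if cost_usd <= 0:
        raise ValueError("cost_usd must be positive")
    return delta_pp / cost_usd

# Most efficient pair quoted in the text: +14.7 pp at $2.09 per task.
best = pp_per_dollar(14.7, 2.09)
print(f"DeepSeek V3 / ScienceAgentBench: {best:.1f} pp per dollar")

# Bounds implied by the quoted Browser-Use ranges (2-10 pp, $1,150-$1,577),
# assuming the best case pairs the largest gain with the lowest cost.
upper = pp_per_dollar(10, 1150)
lower = pp_per_dollar(2, 1577)
print(f"Browser-Use / Online Mind2Web: {lower:.4f} to {upper:.4f} pp per dollar")
```

Even at the optimistic bound, the two ends of the chart sit roughly three orders of magnitude apart in pp per dollar.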

Five of the top six pairs by pp-per-dollar are TAU-bench Tool Calling vs HAL Generalist Agent on customer-service tasks. Design choices that score higher also cost less, the opposite of what catalog pricing would predict. Specialisation wins twice: quality and price.

Is the effect fading as models get smarter or scaffolds mature?

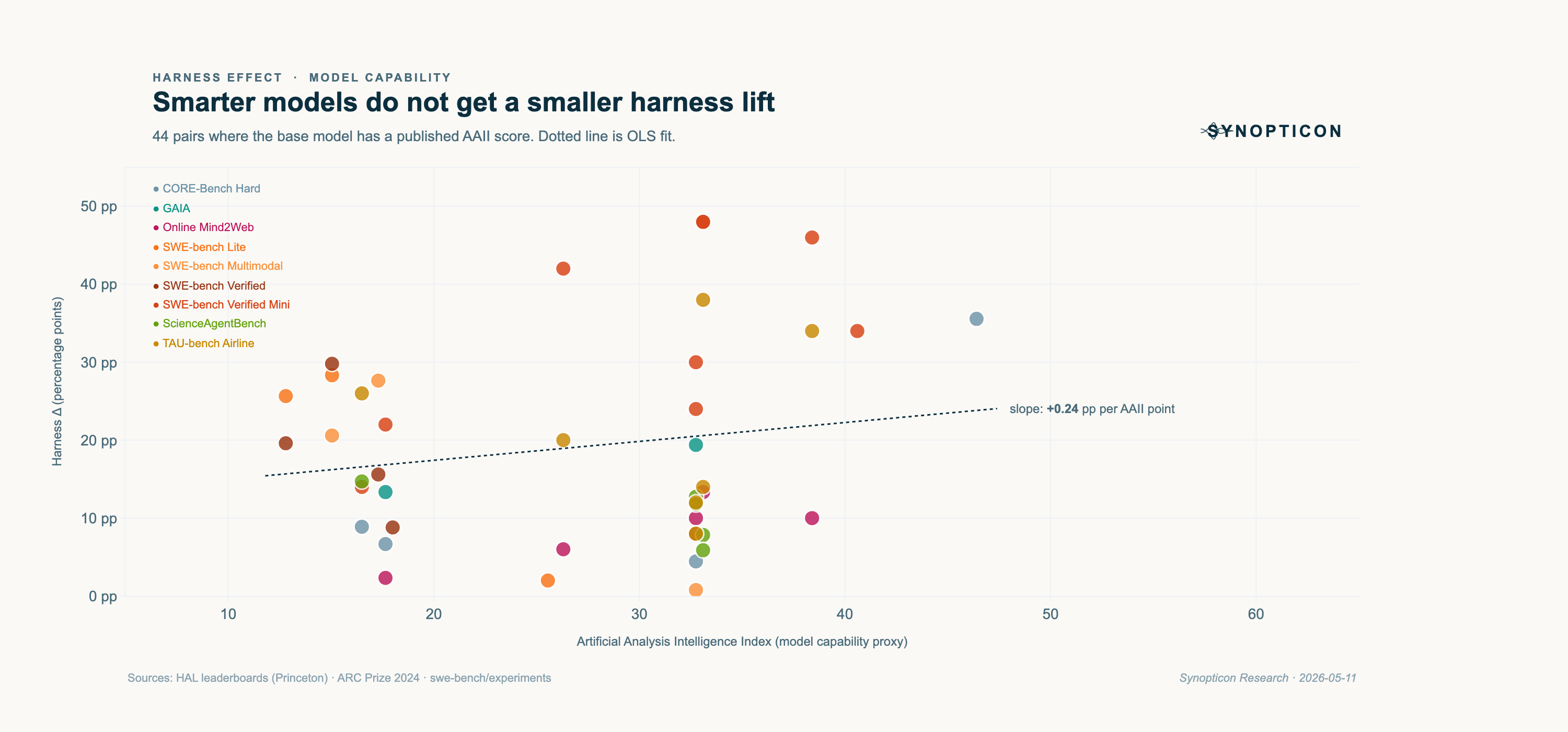

Two natural objections to the thesis. (a) Frontier models will internalise the work scaffolds do, so smarter models should need them less. (b) Scaffold design is maturing, so newer scaffolds should be closing the headroom on base models. Both predict a shrinking harness Δ. Both predict the wrong sign.

AAII is Artificial Analysis's composite index across reasoning, coding, math, and tool-use evals: a single number scoring frontier models from ~10 (GPT-4o-mini class) to ~60 (GPT-5.5 Pro class). We matched 44 of 64 pairs directly. The 20 unmatched are mostly older Claude 4 generations, now superseded.

If smarter models needed less help, we would see a negative slope. We see a faintly positive one. Over the range we sampled, the harness premium does not shrink with model capability; it is flat to slightly rising. A GPT-5.5-class model under the right scaffold beats itself under the wrong one by roughly the same margin a Claude 3-class model would.
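The slope test amounts to an ordinary least-squares fit of |Δ| against AAII. The sketch below is one way to check the sign, not the author's script; the data points are invented and the fitting method is assumed.

```python
def ols_slope(xs, ys):
    """Least-squares slope of y on x (pp of harness gap per index point)."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need two or more matched points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx

# Invented points for illustration: capability index vs |delta| in pp.
toy_index = [10, 20, 30, 40, 50, 60]
toy_abs_delta = [15, 14, 17, 15, 18, 16]

slope = ols_slope(toy_index, toy_abs_delta)
print(f"slope = {slope:+.3f} pp per index point")
# A negative slope would support objection (a); a flat or positive one would not.
```

With only 44 matched pairs, a slope near zero is better read as "no evidence of shrinkage" than as a precise estimate.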

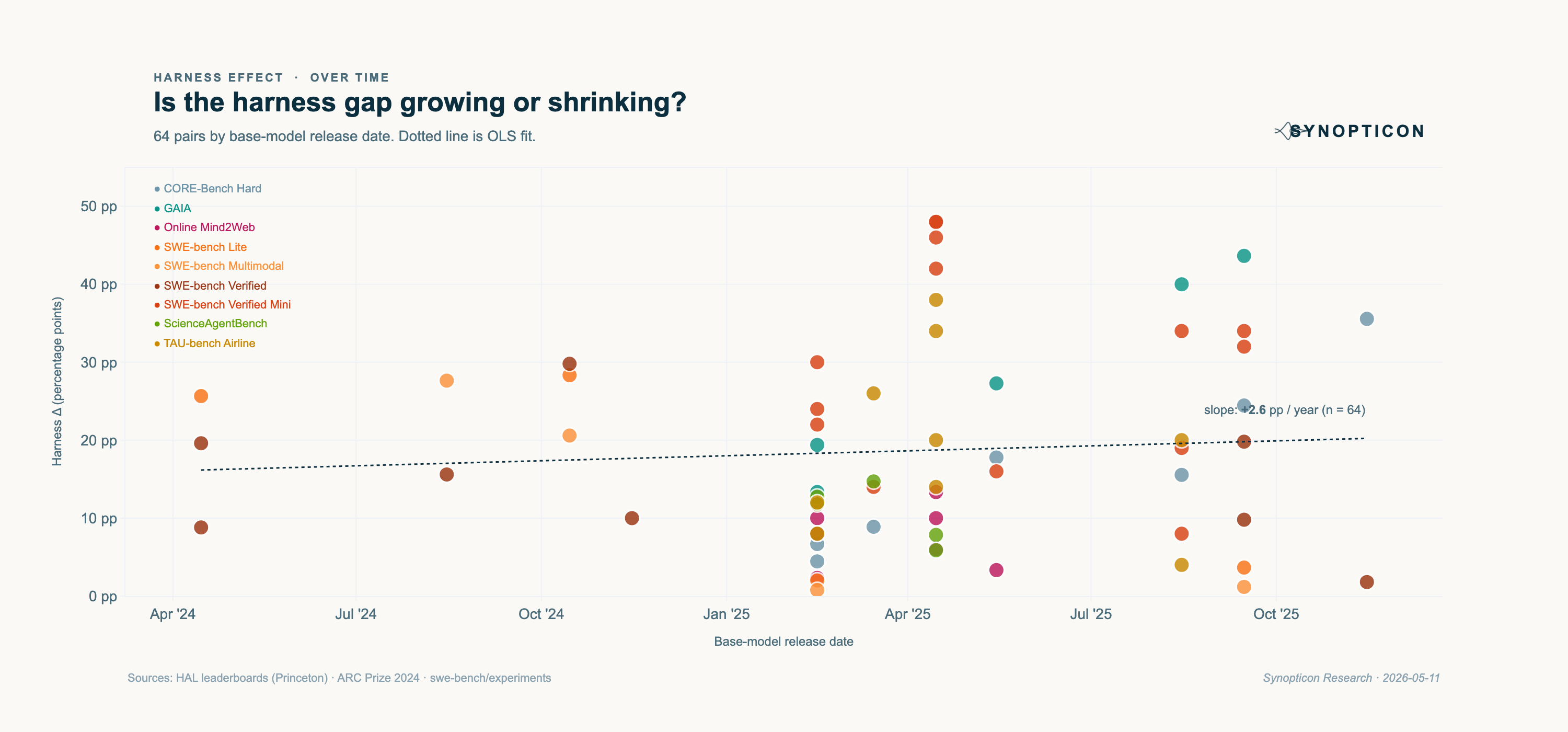

Each dot is anchored to its base model's release date, not the harness submission date. Data spans Mar 2023 (GPT-4 1106) to Oct 2025 (Claude Sonnet 4.5). Newer models are over-represented because they have more leaderboard submissions.

The convergence story (scaffolds hit diminishing returns as base models improve) predicts a falling slope. We get a rising one. Scaffold research is outpacing model research on agentic tasks. Caveat: most new scaffolds in our dataset (SWE-Agent, OpenHands, Refact.ai, EPAM AI/Run, ACoder) are code-specific, where the design space is widest. Whether the trend holds outside code is the open question.

Implications

Five claims follow from the data above. Each is anchored to a specific pair. None is a recommendation.

The harness layer is undercapitalised relative to its share of score.

Model labs have raised more than $200B cumulatively. Harness-layer companies have raised a small fraction of that. The harness accounts for 30–50% of score on agentic benchmarks where the comparison is possible.

Anthropic owns both layers; no competitor has a comparable public showing.

Claude Code beats a generic CORE-Agent by 18–36 pp on every Claude generation tested. OpenAI's Codex CLI has no equivalent same-model benchmark. Model-only leaderboards miss this.

Code scaffolds are crowded; other verticals have neither a benchmark nor a winner.

Cursor is at ~$9B, Cognition at ~$4B. Legal, clinical, finance, and accounting agents have no public same-model leaderboard because the benchmark does not exist yet.

Pure-API providers without a harness story are commoditised.

If a third-party harness explains 30%+ of the score and anyone can buy it, the model API is a commodity input. Cohere, Together, and Mistral La Plateforme are the most exposed. OpenRouter and Portkey are picks-and-shovels on the same trend.

Open-source repos lead the harness market by months.

The frameworks at the top of the coding and web-task leaderboards today (SWE-Agent, OpenHands, Aider, Browser-Use) are open-source. Star and contributor velocity on those repos lead enterprise adoption. Synopticon's GitHub feed tracks them.

In the literature

Three recent papers measure the same phenomenon at different layers of the agent stack. Together they corroborate our finding, sharpen one of the implications, and quantify a limitation.

The convergent finding

A skill is not a harness. A harness wraps the model and runs the agent loop. A skill is a composable module (a markdown file plus optional scripts) that the harness loads at runtime to specialise for a task. The harness sits one layer above the model; the skill sits one layer below the harness.

SkillsBench (Feb 2026) measures the lift from curated skills across seven model × harness configurations on 84 tasks across 11 domains. Their average lift is +16.2 pp. Our dataset reports a median +15.6 pp at the layer above. Two independent groups, two layers apart, the same magnitude.

| Layer | What it adds | Paper | Effect |
|---|---|---|---|
| Harness | Agent loop that wraps the model | This piece (64 pairs) | +15.6 pp median |
| Skill | Composable module the harness loads at runtime | SkillsBench (7 configs × 84 tasks) | +16.2 pp average |

The agent stack compounds. Each layer contributes roughly 15 pp on top of the model on the kinds of tasks both papers measure.

Anthropic's edge extends down a layer

SkillsBench reports that Claude Code is the only commercial harness that reliably uses curated skills. Codex CLI, in their words, "frequently neglects provided Skills": agents acknowledge skill content but implement solutions independently. This sharpens the second implication above (Anthropic owns both layers). The Anthropic premium is two layers deep: the harness wins on integration with the model, and it consumes the skill layer below it more effectively than competitors do.

SoK: Agentic Skills (Feb 2026) adds two pieces of nuance to the skill-layer picture. First, self-generated skills (skills an agent writes for itself) degrade performance by an average −1.3 pp. Curation matters. Second, per-domain variance is large: healthcare skills add +51.9 pp, manufacturing +41.9 pp, software engineering only +4.5 pp. The harness Δ also varies by benchmark (our Online Mind2Web median is 8 pp vs SWE-bench Verified Mini's 31 pp). Both layers reward domain-specific design effort.

The counter-finding

SWE-Skills-Bench (Mar 2026) tests whether skills help on real-world software engineering rather than agentic benchmarks. The answer is mostly no. Of 49 curated skills tested on Claude Haiku 4.5 + Claude Code, 39 produced zero pass-rate improvement, three hurt performance, and the average gain was +1.2 pp. Against SkillsBench's +16.2 pp, the skill-layer lift shrinks by roughly an order of magnitude on in-the-wild tasks.

The reproducibility caveat

OAgents (ICML 2025) dissects agent design components on GAIA and BrowseComp. Their finding: "the lack of a standard evaluation protocol makes previous works, even open-sourced ones, non-reproducible, with significant variance between random runs." Our dataset is a single snapshot per pair. Individual entries carry run-to-run noise. The medians and IQRs in this piece are less noisy than any single row.

Risks to the thesis

Four caveats, in order of how much they should worry you.

- Benchmark gaming. SWE-Skills-Bench (Mar 2026) reports that curated skills add only +1.2 pp on real-world software engineering, against +16.2 pp under SkillsBench's benchmark conditions. The harness Δ likely shrinks an order of magnitude in the wild.

- Selection bias. HAL and SWE-bench leaderboards over-represent submissions optimised for the leaderboard. Production deployments use less-tuned scaffolds. The harness Δ is an upper bound, not an average.

- Distribution can override technical advantage. IDE penetration, default settings, and enterprise sales let model labs absorb harness value back. Microsoft + Copilot is the precedent.

- Eventual convergence. If frontier models internalise agent loops natively, the harness Δ shrinks. o3 and Claude Sonnet 4.5+ trend this way. We have not seen the gap close in 18 months, but it could.

Methodology

A pair is two entries on the same public leaderboard where (1) the base model is identical after normalising version strings, (2) the benchmark is identical, and (3) the agentic framework differs. Reasoning-effort, sample-count, and skill-toggle changes are excluded; they are not framework changes.
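The three conditions can be sketched as a grouping step followed by a pairwise comparison. The normaliser here is a hypothetical stand-in (lower-casing and collapsing separators), the field names are assumed, and the exclusions for reasoning-effort, sample-count, and skill-toggle variants are not modelled; those would need extra columns.

```python
import re
from collections import defaultdict
from itertools import combinations

def normalise_model(name):
    """Hypothetical normaliser: lower-case and collapse spaces, '_' and '-'."""
    return re.sub(r"[\s_\-]+", "-", name.strip().lower())

def build_pairs(entries):
    """Pair entries that share model and benchmark but differ in framework."""
    groups = defaultdict(list)
    for e in entries:
        groups[(normalise_model(e["model"]), e["benchmark"])].append(e)

    pairs = []
    for (model, benchmark), rows in groups.items():
        for a, b in combinations(rows, 2):
            if a["framework"] == b["framework"]:
                continue  # same wrapper: not a framework change
            pairs.append({
                "model": model,
                "benchmark": benchmark,
                "frameworks": (a["framework"], b["framework"]),
                "abs_delta_pp": abs(a["score"] - b["score"]),
            })
    return pairs

# Invented leaderboard rows for illustration only.
toy_entries = [
    {"model": "GPT-4o", "benchmark": "toy-bench", "framework": "scaffold_x", "score": 40.0},
    {"model": "gpt_4o", "benchmark": "toy-bench", "framework": "scaffold_y", "score": 25.0},
    {"model": "other-model", "benchmark": "toy-bench", "framework": "scaffold_x", "score": 50.0},
]
print(build_pairs(toy_entries))
```

Grouping on the normalised name is what lets "GPT-4o" and "gpt_4o" count as the same base model; a production version would also need to deduplicate repeated submissions of one framework.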

Sources: HAL leaderboards (Princeton, six benchmarks, scraped via Playwright); swe-bench/experiments repository (Verified, Lite, Multimodal splits, scored as n_resolved / n_total, then lowest- vs highest-scoring system per model); ARC Prize leaderboard. Cost-efficiency: 43 / 64 pairs where HAL publishes COST (USD) for both harnesses. AAII source: Artificial Analysis snapshot 2026-05-09 (44 / 64 pairs matched).
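For the swe-bench/experiments splits, the scoring rule described above (n_resolved / n_total, then lowest- versus highest-scoring system per model) might look like the following. The field names and toy counts are assumptions; the repository's actual file layout is not reproduced here.

```python
from collections import defaultdict

def low_high_pairs(entries):
    """For each model, pair its lowest- and highest-scoring systems."""
    by_model = defaultdict(dict)
    for e in entries:
        # Score as a percentage of resolved instances.
        by_model[e["model"]][e["system"]] = 100 * e["n_resolved"] / e["n_total"]

    pairs = []
    for model, systems in by_model.items():
        low = min(systems, key=systems.get)
        high = max(systems, key=systems.get)
        if low == high:
            continue  # a single system (or an exact tie) gives no pair
        pairs.append({
            "model": model,
            "low": low,
            "high": high,
            "delta_pp": systems[high] - systems[low],
        })
    return pairs

# Invented submissions for illustration only.
toy_submissions = [
    {"model": "model_a", "system": "sys_1", "n_resolved": 100, "n_total": 500},
    {"model": "model_a", "system": "sys_2", "n_resolved": 150, "n_total": 500},
    {"model": "model_a", "system": "sys_3", "n_resolved": 125, "n_total": 500},
    {"model": "model_b", "system": "sys_1", "n_resolved": 200, "n_total": 500},
]
print(low_high_pairs(toy_submissions))
```

Taking the extremes yields one pair per model and, by construction, the largest gap available for that model, which is worth keeping in mind when comparing these splits with the HAL pairs.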

Numerical notes & what's excluded

Headline numbers are over all 64 pairs: median |Δ| 15.6 pp; mean 18.6 pp; p90 37.3 pp; max 48 pp. 70% of pairs see ≥10 pp, 39% see ≥20 pp.
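The headline statistics can be recomputed from the `delta_pp` column with the standard library. This is a sketch under assumptions: the percentile method used for p90 is not stated in the text (the "inclusive" method is used here), and the toy values are invented rather than taken from the dataset.

```python
import csv
from statistics import mean, median, quantiles

def load_abs_deltas(path):
    """Read |delta_pp| from a CSV with a 'delta_pp' column."""
    with open(path, newline="") as f:
        return [abs(float(row["delta_pp"])) for row in csv.DictReader(f)]

def headline(abs_deltas):
    """Median, mean, p90, max, and the share of pairs at or above 10 and 20 pp."""
    n = len(abs_deltas)
    return {
        "median": median(abs_deltas),
        "mean": mean(abs_deltas),
        # Last of the nine decile cut points is the 90th percentile.
        "p90": quantiles(abs_deltas, n=10, method="inclusive")[-1],
        "max": max(abs_deltas),
        "share_ge_10": sum(d >= 10 for d in abs_deltas) / n,
        "share_ge_20": sum(d >= 20 for d in abs_deltas) / n,
    }

# Invented values for illustration only; not the 64 pairs.
toy = [2, 5, 8, 12, 15, 18, 22, 30, 40, 48]
print(headline(toy))

# Against the real file one would call, for example:
# headline(load_abs_deltas("research/harness-effect/data/harness_pairs.csv"))
```

Different percentile conventions can move p90 by a point or so on 64 rows, so small mismatches against the published 37.3 pp would not necessarily indicate an error.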

Run-to-run noise. Each pair is a single snapshot. OAgents (ICML 2025) reports significant variance on the same model + agent + benchmark combination across re-runs. Medians and IQRs in this piece are less noisy than any single row.

Excluded from the dataset. OSWorld (113 entries parsed but no qualifying pairs, since variation comes from step-budget changes); Cybench (single entry per model); Anthropic / OpenAI internal evals (not public); ARC-AGI-2 (only one same-model pair available, dropped to avoid distorting per-benchmark statistics).

Skill vs harness. We measure framework-vs-framework effects at the harness layer. SkillsBench measures skill-vs-no-skill effects one layer below.

Scripts: research/harness-effect/scripts/. Dataset: research/harness-effect/data/harness_pairs.csv.