The AI race, measured

The AI race, told through evidence. Collected at the source, every day.

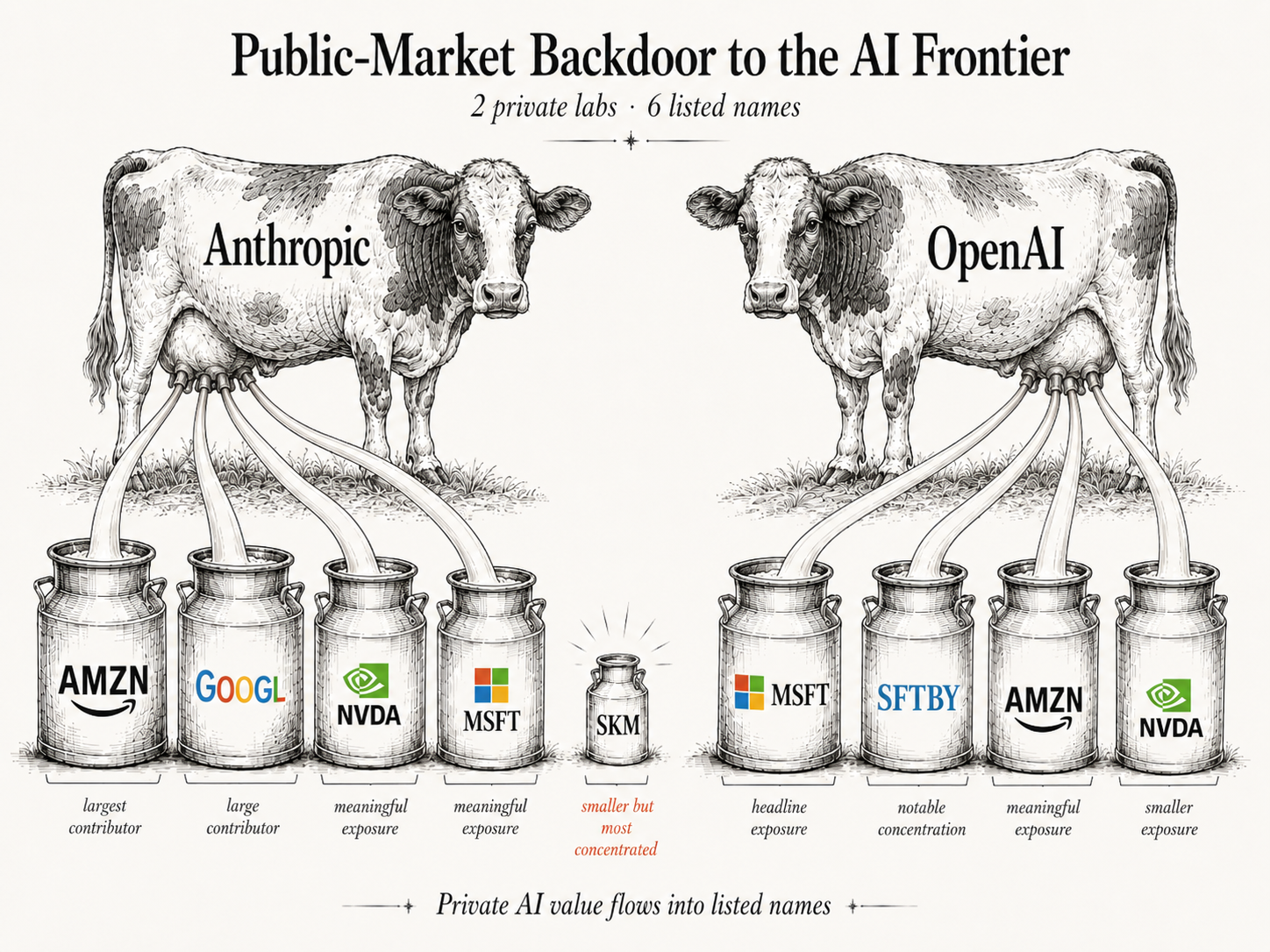

Most of the AI story is told through press releases and announcements. The data underneath — model pricing, token consumption, benchmarks, agent commits, training compute, developer adoption — lives in dozens of incompatible places and changes weekly. There is no canonical source.

We built one. Synopticon collects across the AI ecosystem every day and keeps a continuous record of what's moving in the race. It is a record no one starting tomorrow could reconstruct.

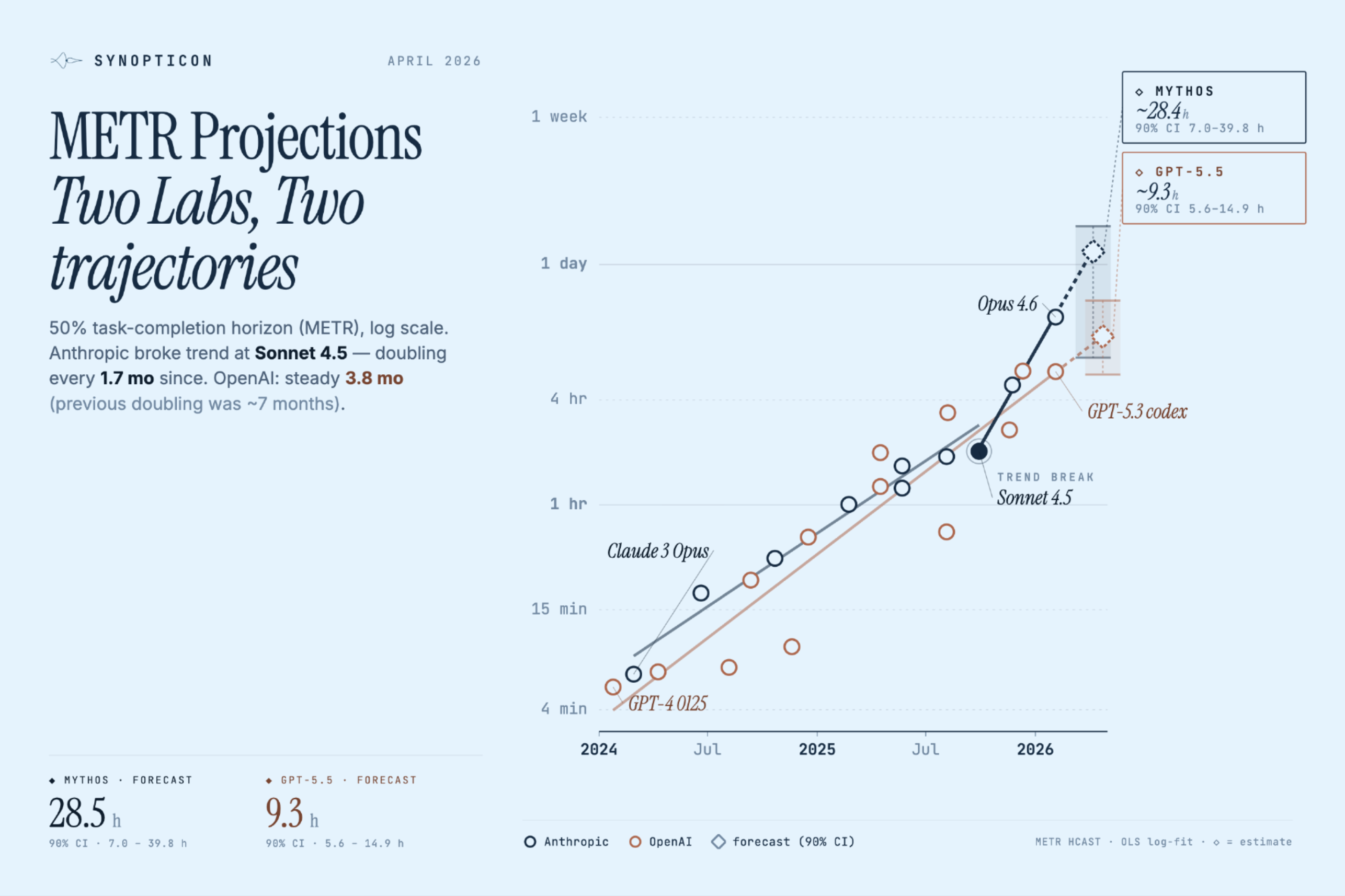

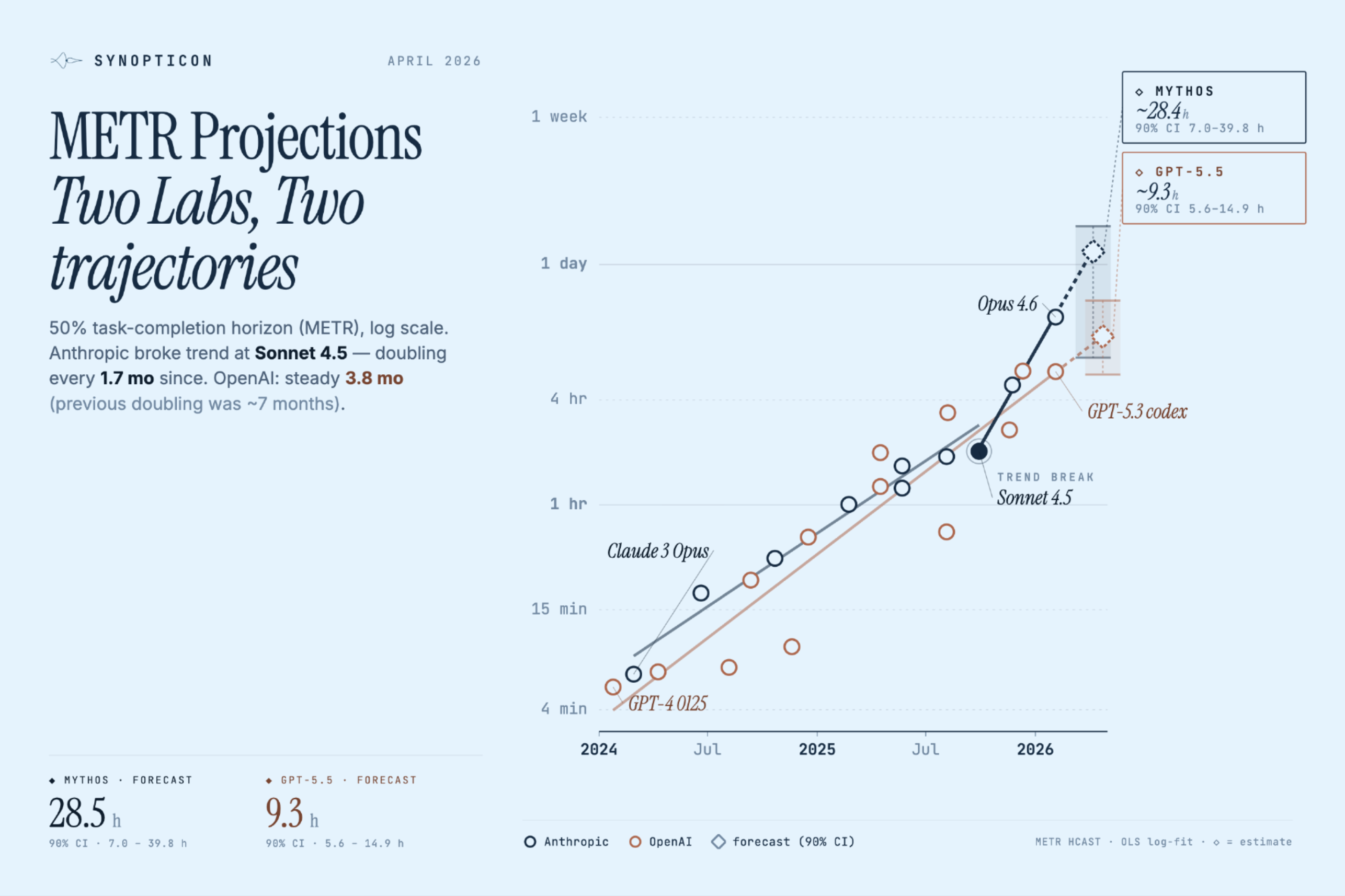

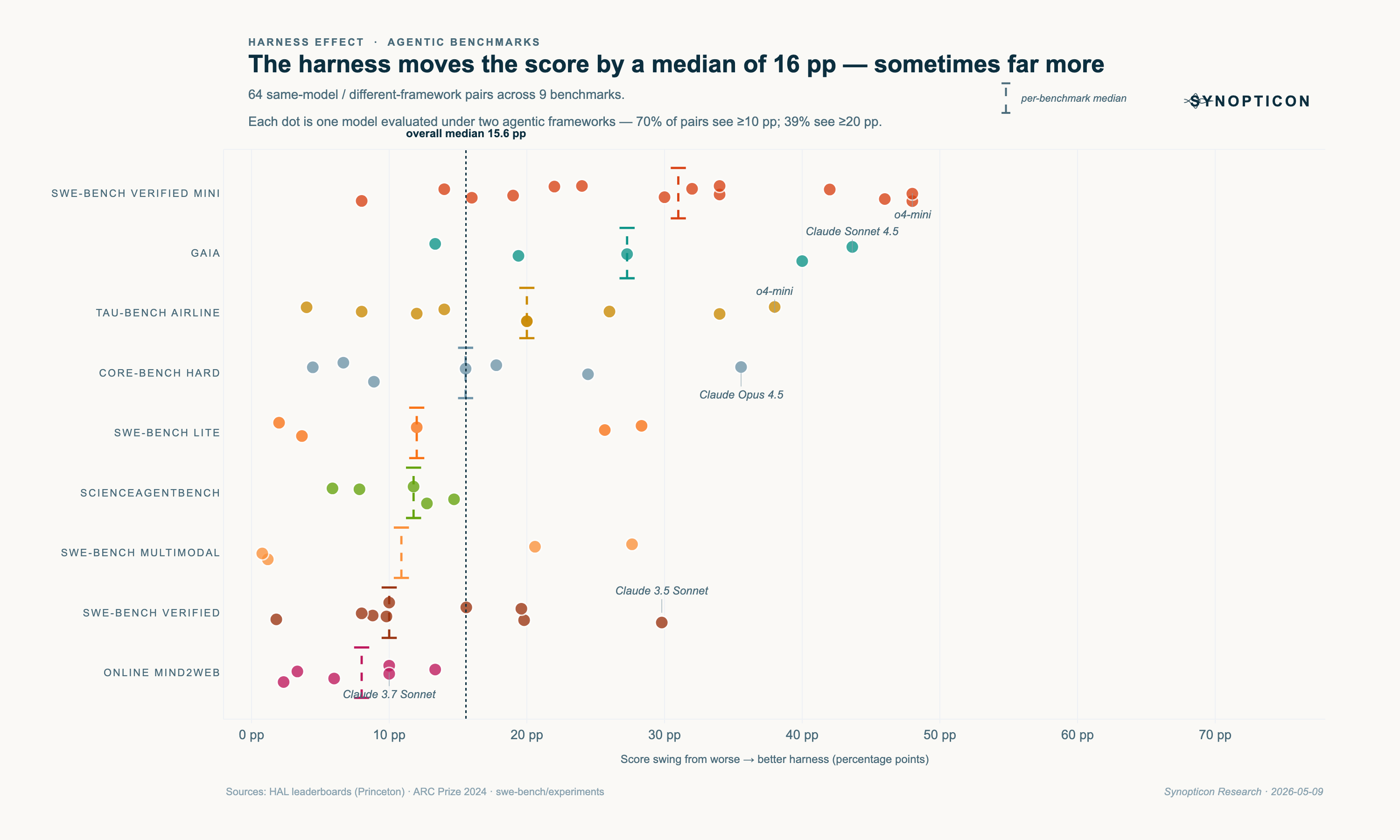

The product is in the triangulation. Anthropic claims one thing, OpenRouter shows another, Portkey shows a third — and the gap between them is usually where the story is. We track those gaps daily and publish what they show, in granular detail: by lab and model, by benchmark category, by enterprise vs. developer routing, by SDK adoption per package. Not "AI capex up 40%." That's the wrong map.
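To make the idea of a gap concrete, here is a minimal sketch of triangulation: take the same metric from several sources and, for each model, measure how far apart the highest and lowest readings are. Everything in it is an assumption for illustration, not Synopticon's actual pipeline: the source names, the model names, the `source_gaps` helper, and the numbers are all invented.

```python
from collections import defaultdict

# Hypothetical readings: (source, model, share of tokens in percent).
# Source names, model names, and values are invented for illustration only.
readings = [
    ("vendor_claim", "model-a", 40.0),
    ("router_x", "model-a", 25.0),
    ("gateway_y", "model-a", 31.0),
    ("vendor_claim", "model-b", 20.0),
    ("router_x", "model-b", 22.0),
]


def source_gaps(rows):
    """Return, per model, the spread between the highest and lowest source reading."""
    by_model = defaultdict(dict)
    for source, model, value in rows:
        by_model[model][source] = value

    gaps = {}
    for model, values in by_model.items():
        if len(values) < 2:
            continue  # a single source cannot be triangulated
        high = max(values, key=values.get)
        low = min(values, key=values.get)
        gaps[model] = {"high": high, "low": low, "gap": values[high] - values[low]}
    return gaps


gaps = source_gaps(readings)

# Largest disagreement first: that is where to look for the story.
for model, g in sorted(gaps.items(), key=lambda kv: -kv[1]["gap"]):
    print(f"{model}: {g['high']} vs {g['low']}, gap {g['gap']:.1f} points")
```

Sorting by the size of the gap rather than by the headline number is the point of the sketch: the models where sources disagree most come first.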

What you get: research that investors and operators can act on. Evidence first. No sponsored takes. No claim without a chart.

Data-driven analysis of the AI capability race, delivered every week.